Efficient learning

- Blog: Towards Data Science

- Efficient Inference in Deep Learning - Where is the Problem?

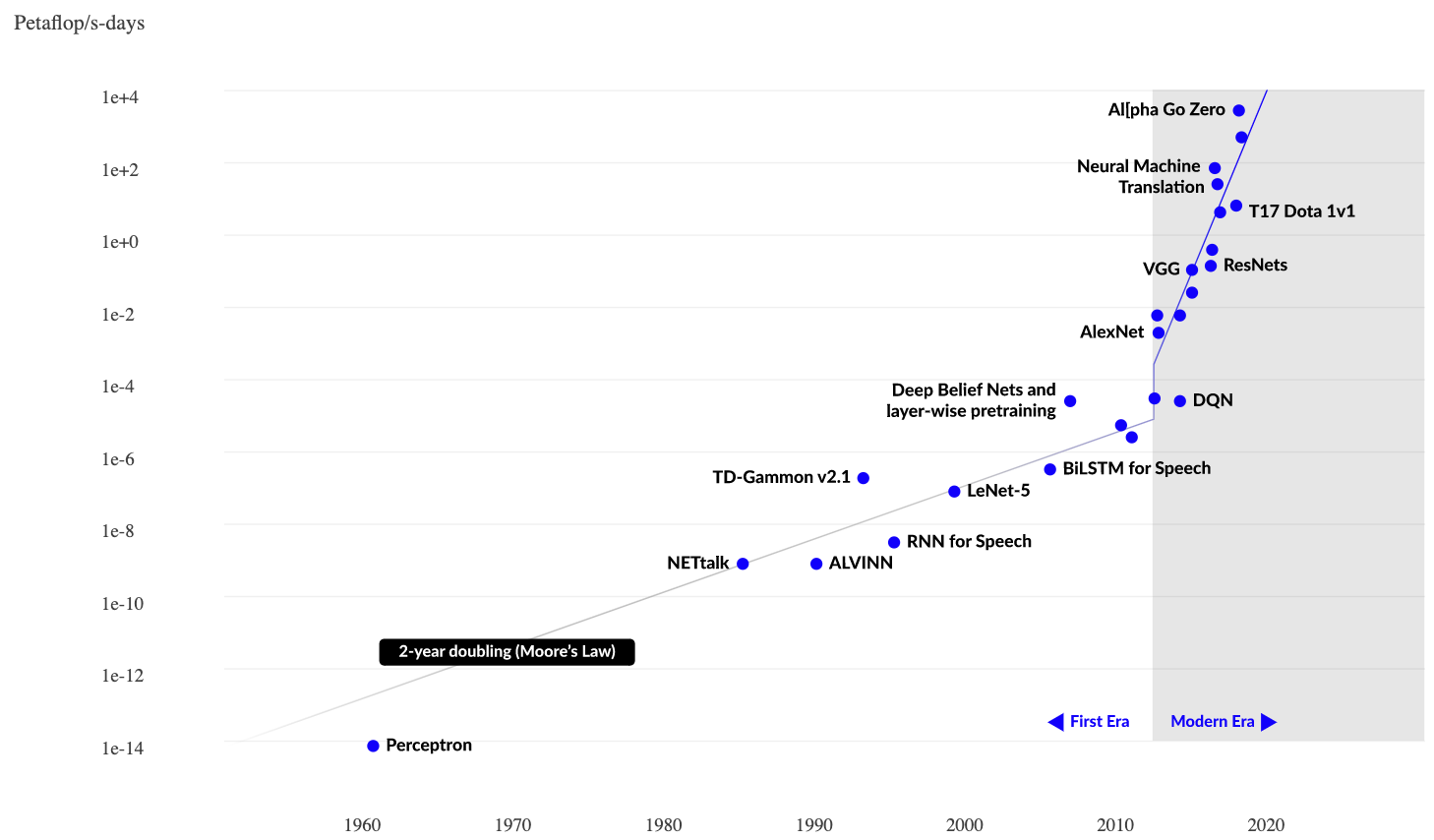

(2020, by Amnon Geifman)

- Petaflops: $10^{15}$ floating point operations per second

- CPU: $\sim 0.1\cdot 10^{12}$ floating point operations per second

- GPU: $\sim (1\!-\!10)\cdot 10^{12}$ floating point operations per second

- $1\,\mathrm{day}\ \hat{=}\ 86.4\cdot10^3\,\mathrm{s}$

- Moore's law: performance of hardware doubles every 1.8-2 years

- ML requirements: doubling every 3-4 months
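To make the numbers above concrete, here is a minimal Python sketch of the arithmetic. It assumes the day-to-seconds conversion is listed so that compute can be expressed as "one petaflops sustained for one day"; that reading, and all function and constant names, are illustrative assumptions and not taken from the blog post.

```python
# Rough figures copied from the notes above (orders of magnitude only).
SECONDS_PER_DAY = 86.4e3      # 1 day in seconds
PETAFLOPS = 1e15              # floating point operations per second
CPU_FLOPS = 0.1e12            # approximate CPU throughput
GPU_FLOPS_LOW = 1e12          # lower end of the GPU range
GPU_FLOPS_HIGH = 10e12        # upper end of the GPU range


def petaflops_day_ops():
    """Total operations performed by one petaflops sustained for one day."""
    return PETAFLOPS * SECONDS_PER_DAY


def days_to_compute(total_ops, flops):
    """Days a device with the given throughput needs for total_ops operations."""
    return total_ops / flops / SECONDS_PER_DAY


def growth_factor(years, doubling_time_years):
    """Multiplicative growth after `years` for a given doubling time."""
    return 2.0 ** (years / doubling_time_years)


if __name__ == "__main__":
    ops = petaflops_day_ops()
    print(f"one petaflops-day: {ops:.3g} operations")
    print(f"CPU:        {days_to_compute(ops, CPU_FLOPS):.0f} days")
    print(f"GPU (slow): {days_to_compute(ops, GPU_FLOPS_LOW):.0f} days")
    print(f"GPU (fast): {days_to_compute(ops, GPU_FLOPS_HIGH):.0f} days")

    # Hardware (doubling every 2 years) vs. ML demand (doubling every 3-4 months)
    years = 2
    print(f"hardware over {years} years:  x{growth_factor(years, 2.0):.0f}")
    print(f"ML demand (4-month doubling): x{growth_factor(years, 4 / 12):.0f}")
    print(f"ML demand (3-month doubling): x{growth_factor(years, 3 / 12):.0f}")
```

Under these figures, one petaflops-day is about $8.64\cdot 10^{19}$ operations, which would keep a single CPU busy for roughly $10^4$ days and a single GPU for 100 to 1000 days. Over two years, hardware performance doubles about once, while ML requirements grow by a factor of about 64 to 256. That gap is the problem the blog post title refers to.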